Flickr is full of photos taken around the world. We’ve seen how these photos can be visualized to understand the world around us: what cameras people use, when people take photos, and how people describe and tag photos around the world. But can we actually see the world through the lens of the photos?

Last year, Flickr and Yahoo Labs worked on finding points-of-interest by looking at the compass information stored in the photos alongside the GPS data. It turns out, for many monuments, patterns emerge due to how the park or building has been designed. For example, at the Taj Mahal, you stand at the top of the reflecting pool and take this photo:

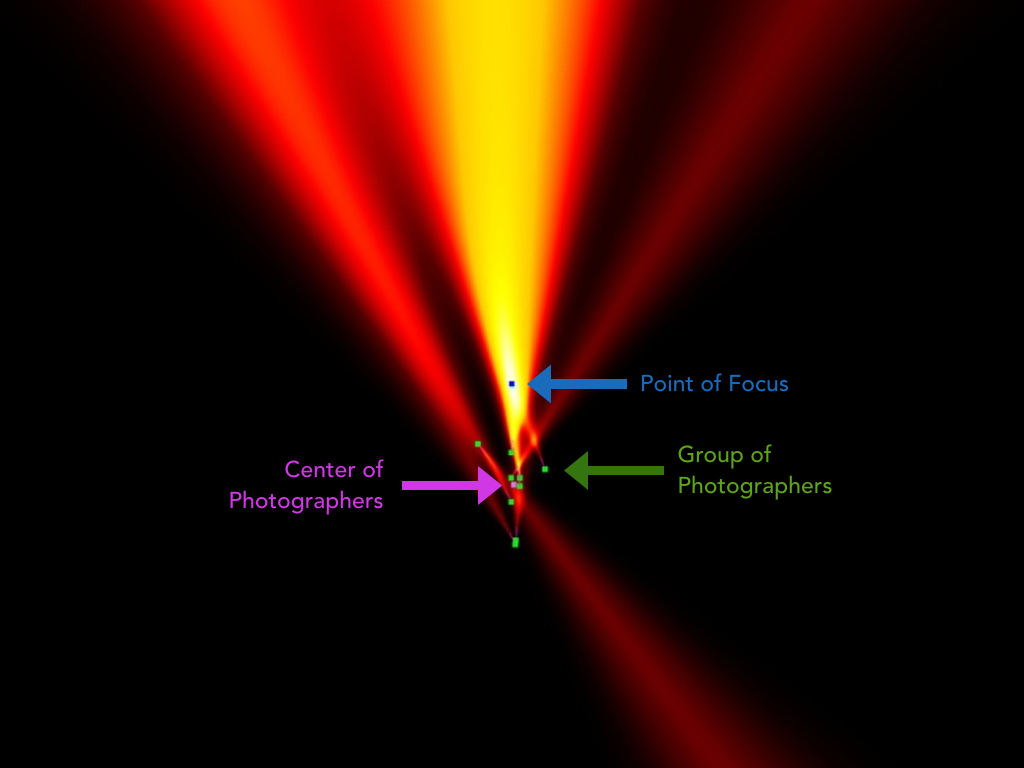

Not everyone takes that photo at the Taj Mahal, but most do. Using the GPS and compass data in all the public photos, we can plot the distribution of photographers and camera angles as a heat map, and we’ll see the Taj Mahal appear in white.
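To make the idea concrete, here is a minimal sketch of how such a heat map could be accumulated. It is an illustration only, not the code Flickr and Yahoo Labs used. It assumes each photo has already been projected to local coordinates in metres (x east, y north) and that the compass bearing is in degrees clockwise from north; the function name `bearing_heatmap` and the `cell`, `reach`, and `step` parameters are made up for this example.

```python
import math
from collections import Counter

def bearing_heatmap(photos, cell=10.0, reach=500.0, step=5.0):
    """Count how many photo sight lines cross each grid cell.

    photos: iterable of (x, y, bearing_deg) tuples. Assumes x is east and
            y is north in metres, bearing clockwise from north.
    cell:   grid cell size in metres (hypothetical default).
    reach:  how far to extend each sight line, in metres (hypothetical).
    step:   sampling distance along the sight line, in metres (hypothetical).
    """
    heat = Counter()
    samples = int(reach / step) + 1
    for x, y, bearing in photos:
        theta = math.radians(bearing)
        # Compass convention: 0 degrees points north (+y), 90 points east (+x).
        dx, dy = math.sin(theta), math.cos(theta)
        crossed = set()
        for i in range(samples):
            d = i * step
            crossed.add((math.floor((x + d * dx) / cell),
                         math.floor((y + d * dy) / cell)))
        # Each photo votes at most once per cell.
        heat.update(crossed)
    return heat

# Three photographers standing in different spots, all facing the same subject.
photos = [(5.0, 5.0, 0.0), (-95.0, 105.0, 90.0), (105.0, 105.0, 270.0)]
hottest_cell, votes = bearing_heatmap(photos).most_common(1)[0]
```

The cells where the most sight lines cross are the ones that would show up brightest on the map.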

Recently, scientists in the Department of Computer Science at The University of North Carolina at Chapel Hill (UNC) proposed a streamlined method for 3D reconstruction using a single laptop. We were intrigued and ran their method. Computer vision techniques can align a set of photos into a single projection; the example case below uses 1,300 photos.

From there, one can compute each ‘point’ where the views from several photos intersect and build a 3D model.
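As a rough illustration of that intersection step, the sketch below finds the 3D point closest to a set of camera rays in the least-squares sense. This is a textbook simplification and not the UNC method: a real pipeline also has to estimate the camera poses and refine everything jointly. The names `triangulate`, `solve3`, and `det3` are hypothetical, and the rays are assumed to be given as an origin and a direction in a shared coordinate frame.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def solve3(A, b):
    """Solve the 3x3 linear system A p = b with Cramer's rule."""
    det = det3(A)
    if abs(det) < 1e-12:
        raise ValueError("rays are (nearly) parallel; no unique point")
    point = []
    for k in range(3):
        # Replace column k of A with b.
        Ak = [[b[i] if j == k else A[i][j] for j in range(3)] for i in range(3)]
        point.append(det3(Ak) / det)
    return tuple(point)

def triangulate(rays):
    """Point minimising the summed squared distance to every ray.

    rays: list of (origin, direction) pairs, each a 3-tuple.
    Solves  sum(I - d d^T) p = sum(I - d d^T) o  where d is the unit
    direction and o the origin of each ray.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for origin, direction in rays:
        norm = math.sqrt(sum(c * c for c in direction))
        d = [c / norm for c in direction]
        for i in range(3):
            for j in range(3):
                m = (1.0 if i == j else 0.0) - d[i] * d[j]
                A[i][j] += m
                b[i] += m * origin[j]
    return solve3(A, b)

# Three cameras in different places, all looking at the same point.
rays = [((0, 0, 0), (1, 2, 3)),
        ((5, 2, 3), (-1, 0, 0)),
        ((1, 2, -4), (0, 0, 1))]
point = triangulate(rays)
```

With noisy real-world photos the rays never meet exactly, which is why a least-squares formulation is a natural fit here; repeating this for many matched features yields a point cloud.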

This is an interactive 3D model we reconstructed by analyzing thousands of public Flickr Creative Commons photos. There’s quite a bit of detail (but unfortunately not enough data to print a full 3D sculpture because it’s not so easy to take a photo of the back of the Taj Mahal). Here at Flickr we spent some time — almost melting our laptops — to create other models of American monuments like the Lincoln Memorial.

In this dense reconstruction you can see the viewpoint of every camera, whether it focused on the text or the statue, and even what filters some photographers were using. The statue is a 3D reconstruction based on all the projection points and textures of all the photos.

The Statue of Liberty also rendered quite well, but it had a bit of ‘ghosting’ on the torch.

The UNC folks also made a great video that shows you the depth and fidelity of the reconstructions of the world one can create.

[youtube https://www.youtube.com/watch?v=bRYqyoqUJuM?rel=0&showinfo=0&w=640&h=480]

UNC used our YFCC100M dataset and we’re very excited to see people using Flickr’s photos in innovative and scientific ways.